Photo by Raychel Sanner on Unsplash

In part 1 of this series, I explained Azure bandwidth charges and identified the data transfer costs of different scenarios and network topologies. This post focuses on design patterns to follow when building solutions in Azure with the cost of bandwidth in mind. It also covers how Azure customers can optimize those costs by using Pure Cloud Block Store™ to minimize network utilization and reduce the often-overlooked Azure data transfer costs.

I will start with what I call design patterns: a set of best practices you can use to optimize Azure bandwidth. By following these patterns, you can improve the performance and reliability of your applications while reducing your bandwidth costs. I group them into two sections: pre-deployment and post-deployment.

Design Patterns: Pre-Deployment

Determine the Type of Egress. Is the data egressing to the public internet, to another Azure region, or to another availability zone? Egress to the public internet is usually more expensive than zone-to-zone transfers. Refer to the previous blog post for details.

Choose the right Azure region. Azure bandwidth pricing varies by region, and selecting a region depends on multiple factors, to name a few: proximity to business operations and end users, egress destination, and regulatory and compliance requirements.

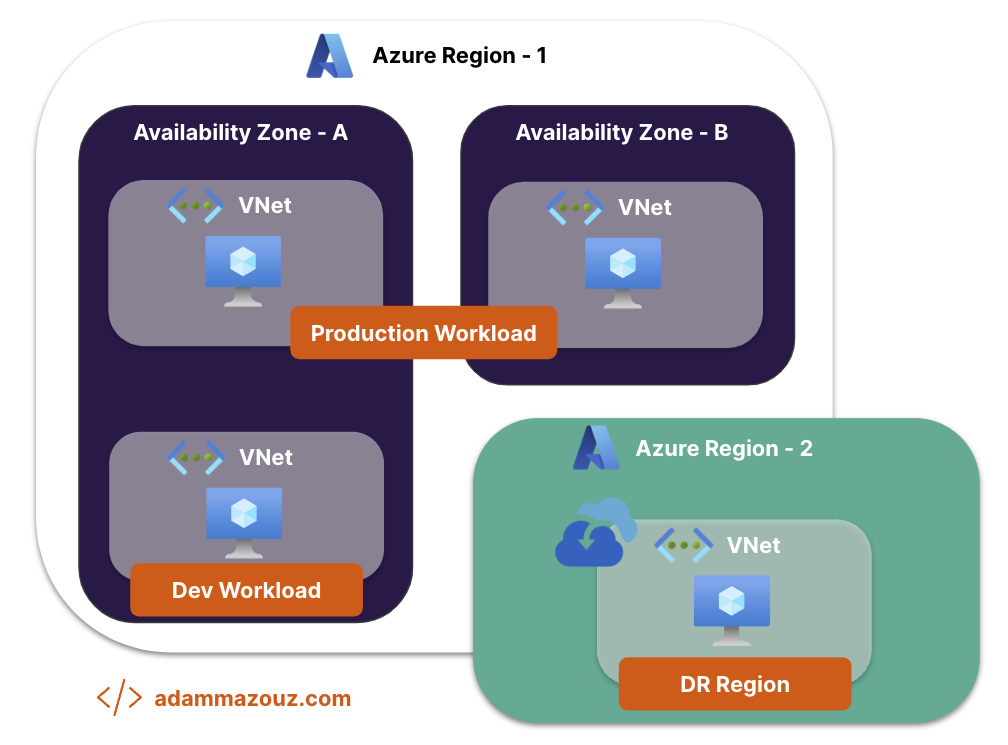

Workload Type. Each type of workload or application has different compliance and service-level agreement requirements. Production workloads might be deployed in a high-availability configuration, so data has to flow across zones or regions. That is not the case for most development workloads, which can be deployed in a single zone because redundancy and resiliency are not needed.

Draw Network Topology. Before deploying any resources, start by planning the network topology. Draw the VNets, regions, and availability zones, and identify which resources need public internet access and which will route internally.

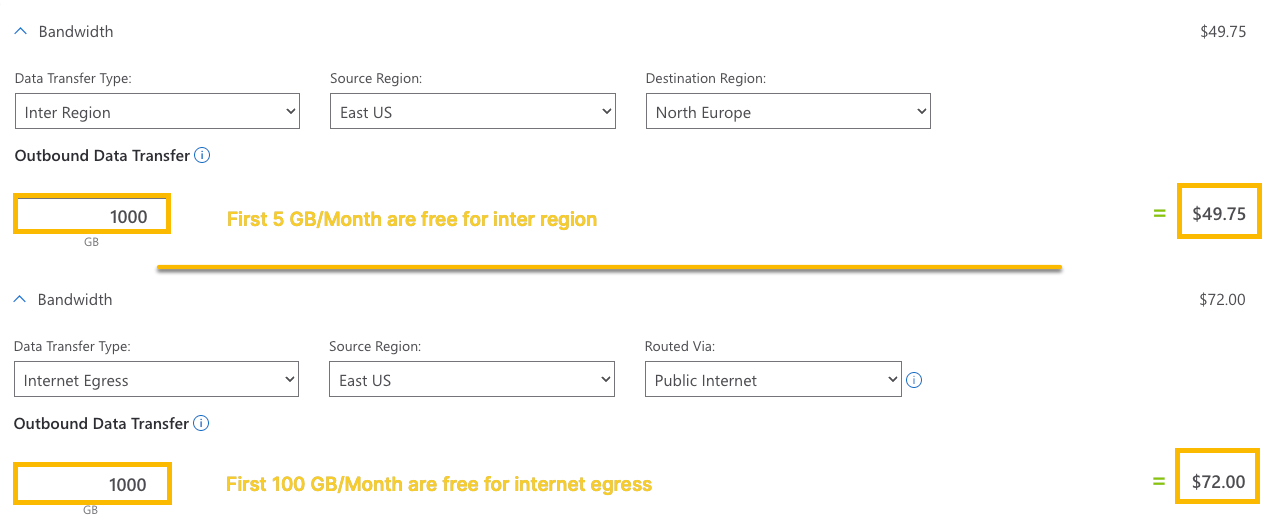
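Once the topology is drawn, it can help to list the expected traffic flows and group them by the kind of boundary they cross. The snippet below is a minimal sketch of that idea, not an Azure tool: the `classify` and `gb_by_path` helpers, the `(region, zone)` tuples, and the sample volumes are all hypothetical and exist only for illustration.

```python
from collections import defaultdict

def classify(src, dst):
    """Label a flow by the boundary it crosses.

    src and dst are hypothetical (region, zone) tuples; dst may also be
    the string "internet" for traffic leaving Azure.
    """
    if dst == "internet":
        return "internet"
    if src[0] != dst[0]:
        return "cross_region"
    if src[1] != dst[1]:
        return "cross_zone"
    return "same_zone"

def gb_by_path(flows):
    """Sum the monthly GB of each (src, dst, gb) flow per path type."""
    totals = defaultdict(float)
    for src, dst, gb in flows:
        totals[classify(src, dst)] += gb
    return dict(totals)

# Illustrative flows only; replace with the flows from your own diagram.
flows = [
    (("eastus", "1"), ("eastus", "1"), 500),  # app tier to database, same zone
    (("eastus", "1"), ("eastus", "2"), 200),  # HA replica in another zone
    (("eastus", "1"), ("westus", "1"), 100),  # DR copy in another region
    (("eastus", "1"), "internet", 50),        # responses to end users
]
print(gb_by_path(flows))
```

The per-path totals can then be priced with the rates that apply to each path type, which keeps the cost drivers visible before anything is deployed.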

Look Up Costs with the Azure Pricing Calculator. Azure provides a pricing calculator that you can use to estimate costs based on your specific requirements. Check the example below for a virtual machine bandwidth calculation.
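For a quick back-of-the-envelope check alongside the calculator, tiered per-GB pricing can be approximated in a few lines. This is only a sketch: the tier sizes and prices in `HYPOTHETICAL_TIERS` are placeholders I made up, not Azure's published rates, so substitute the figures the pricing calculator shows for your region.

```python
# Placeholder tiers: (tier size in GB, price per GB in USD).
# These values are assumptions for illustration, NOT Azure's actual rates.
HYPOTHETICAL_TIERS = [
    (100, 0.00),            # assumed free allowance
    (10_000, 0.08),         # assumed rate for the next 10,000 GB
    (float("inf"), 0.06),   # assumed rate for everything beyond that
]

def estimate_egress_cost(gb_out, tiers=HYPOTHETICAL_TIERS):
    """Estimate the monthly cost of gb_out GB of egress under tiered pricing."""
    remaining = gb_out
    cost = 0.0
    for size, price in tiers:
        if remaining <= 0:
            break
        billed = min(remaining, size)  # portion of traffic billed in this tier
        cost += billed * price
        remaining -= billed
    return round(cost, 2)

# Example: estimate a month with 1,100 GB of outbound traffic.
print(estimate_egress_cost(1_100))
```

The tiered loop matters because the effective per-GB price changes with volume, so doubling traffic does not necessarily double the bill.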

Consider using ExpressRoute. If your workload generates a lot of egress traffic, or data regularly flows between on-premises and Azure, and both the data volume and the required transfer speed are known, Azure ExpressRoute is the go-to solution. It provides a dedicated private connection between your on-premises network and Azure, with fixed-price and metered plan options.

Design Patterns: Post-Deployment

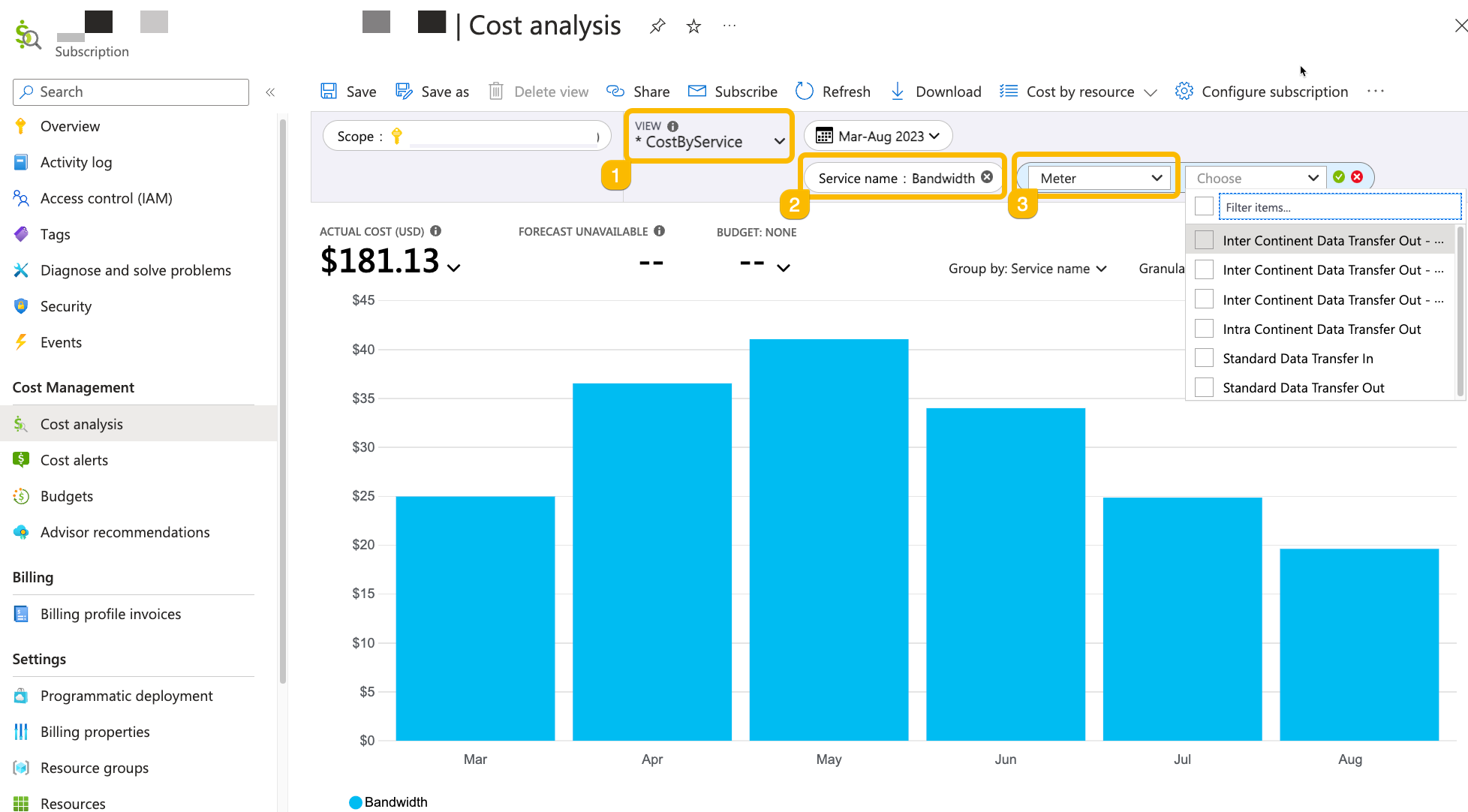

Cost Management. The first place to start is the Azure Cost Management tool. Once you apply the scope, you can monitor, analyze, and optimize spending on Azure resources, including bandwidth costs.

Set Alerts. You can also set up budgets and spending alerts to receive notifications when bandwidth costs approach predefined limits for a region or a specific resource group.
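The logic behind a budget alert is simple threshold checking. The sketch below illustrates the concept only; `triggered_alerts` is a hypothetical helper, not part of any Azure SDK, and the 50%, 80%, and 100% thresholds are assumed values.

```python
def triggered_alerts(spend, budget, thresholds=(0.5, 0.8, 1.0)):
    """Return the budget fractions that the current spend has reached.

    The thresholds are assumed values; pick the ones that suit your budget.
    """
    if budget <= 0:
        raise ValueError("budget must be positive")
    return [t for t in thresholds if spend >= t * budget]

# Example: 85 USD of bandwidth spend against a 100 USD monthly budget.
print(triggered_alerts(85, 100))
```

Setting more than one threshold gives an early warning before the budget is actually exhausted.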

Configure Monitoring. Proactive monitoring is the best way to avoid a surprise when the bill arrives. Use Azure Monitor to create custom dashboards that track data transfer metrics and identify potential cost optimization opportunities.

Optimize Azure Bandwidth with Pure Cloud Block Store

If you are familiar with Pure Storage FlashArray, you will recognize “Purity,” the operating environment that provides its data efficiency and enterprise-grade features. Pure Cloud Block Store is powered by the same operating environment, extending those features to the cloud. But how can Pure Cloud Block Store optimize Azure bandwidth costs?

Minimize Network Utilization

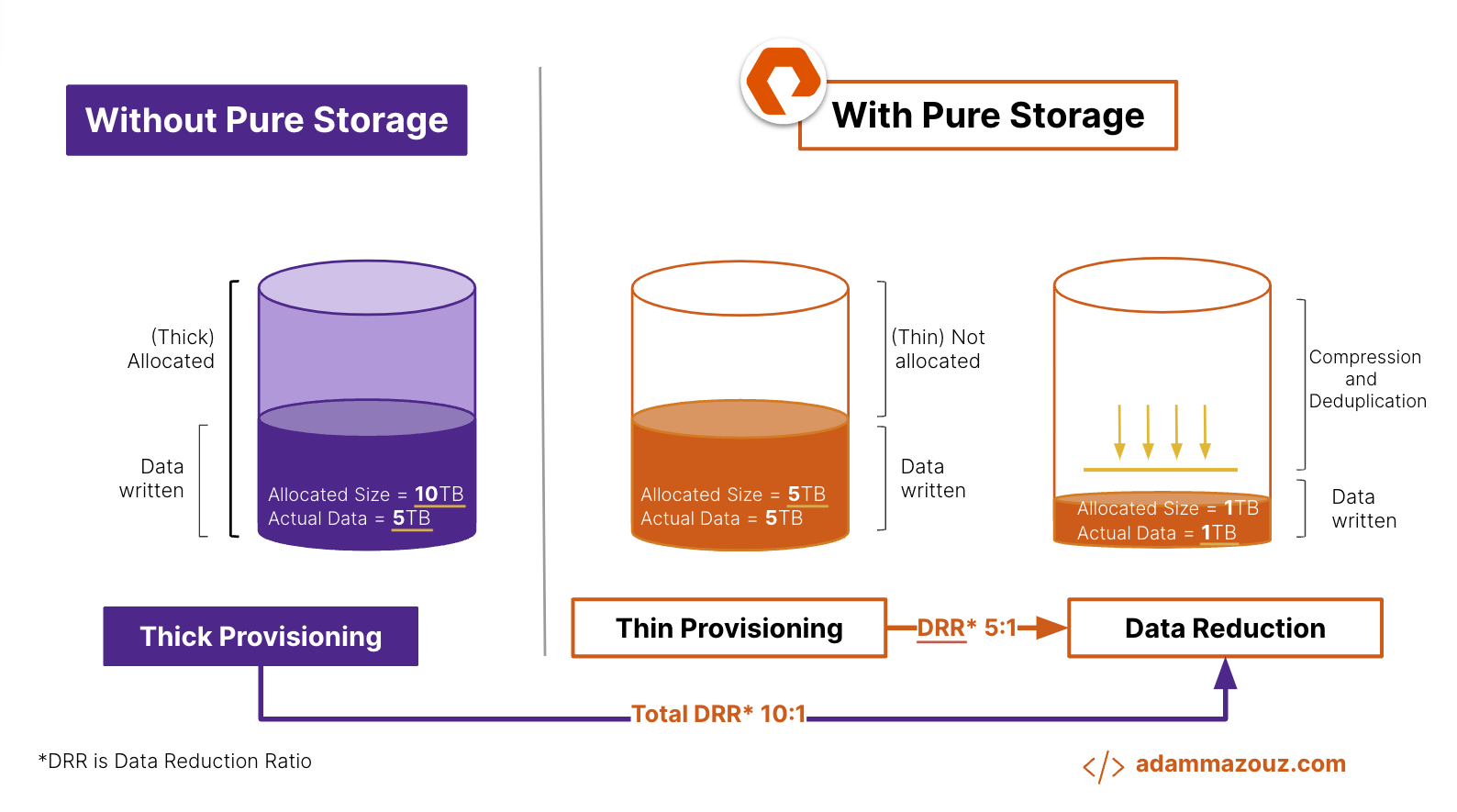

By default, Pure Cloud Block Store employs advanced data reduction techniques such as deduplication and compression. This significantly reduces the amount of data that needs to be stored in the cloud. Additionally, Pure Cloud Block Store preserves compression and deduplication when data is replicated over the network, resulting in bandwidth optimization and cost savings.

Furthermore, when a data volume is replicated from one Pure Cloud Block Store instance or FlashArray to another, the initial copy contains the entire dataset, while subsequent copies include only the incremental changes, or deltas. This provides efficient data replication with low bandwidth utilization.
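The combined effect of preserved data reduction and delta-only replication can be put into rough numbers. The following is a minimal sketch under stated assumptions: the 2.5:1 reduction ratio, the 10% change rate per cycle, and the seven replication cycles are illustrative values, and real results depend on the workload.

```python
def replicated_tb(logical_tb, reduction_ratio, change_rate, cycles):
    """TB sent over the network: one reduced baseline plus `cycles` reduced deltas."""
    baseline = logical_tb / reduction_ratio
    delta = logical_tb * change_rate / reduction_ratio
    return baseline + cycles * delta

def full_copy_tb(logical_tb, cycles):
    """TB sent if every cycle shipped the full, unreduced dataset instead."""
    return logical_tb * (1 + cycles)

# Assumed inputs: 25 TB logical, 2.5:1 reduction, 10% change, 7 cycles.
with_reduction = replicated_tb(25, 2.5, 0.10, 7)
without_reduction = full_copy_tb(25, 7)
print(f"reduced + incremental: {with_reduction:.1f} TB")
print(f"full copies:           {without_reduction:.1f} TB")
```

Because transfer is billed per GB, the gap between the two figures translates directly into the difference in bandwidth charges.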

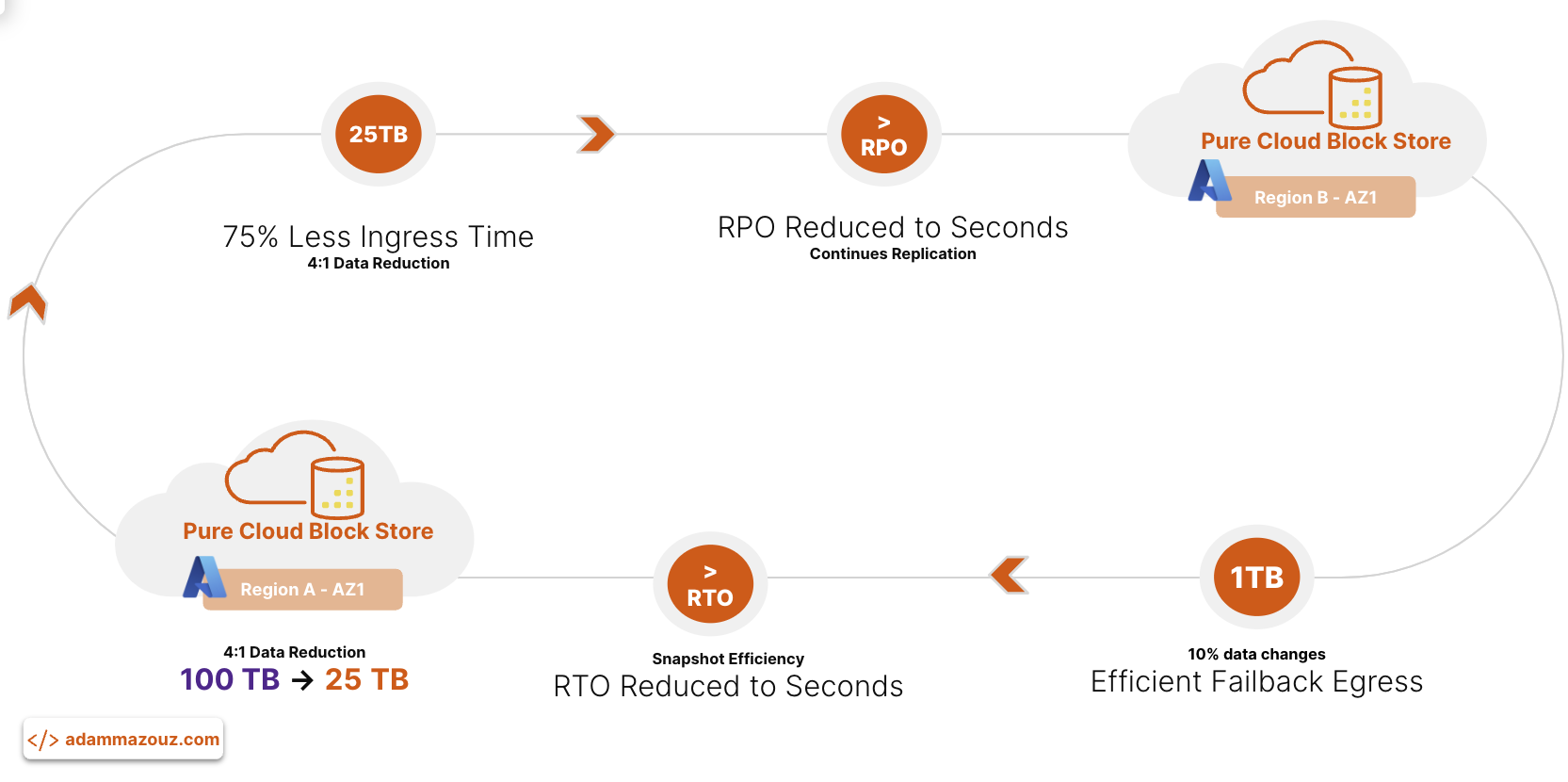

Efficient Failover/Failback

Pure Cloud Block Store offers efficient data replication capabilities, both asynchronous and synchronous, that ensure data availability and disaster recovery while minimizing bandwidth usage. Because data reduction is preserved, replication takes less time and moves less data overall, which matters a great deal when data transfer charges apply. This reduces cost and can bring the recovery point objective (RPO) down to seconds.

Wait a minute! What about sending data back, or “failback”? Pure Cloud Block Store replication connections are data-aware. If I replicate the same data volumes back (25 TB in the diagram example above) to the source Pure Cloud Block Store (or to an on-premises FlashArray), only the data that changed on the target is replicated (1 TB for a 10% data change in that example). In other words, no byte is sent twice.

Further details and cost analysis can be found in this knowledge base.

Real-time Analytics with Pure1®

When it comes to real-time insights and optimization, I won’t miss the chance to highlight Pure1. Pure1 is an AIOps platform that gives you a single interface to manage all your storage arrays. The cloud service provides critical insight into your technology stack, including real-time insights into your storage performance and data reduction statistics. I will cover more about Pure1 in follow-up blogs.

Conclusion

Whether you use Azure for production, development, or disaster recovery, you have to start with design patterns and optimization techniques. But getting it all packaged in one data solution such as Pure Cloud Block Store might be the right optimization approach, because you can reduce both the storage footprint and data transfer costs, diminishing an overlooked cost that does matter.

If I missed a pattern or best practice that you think Azure customers should adopt, put it in the comment section below or reach out to chat and exchange ideas. Happy reading …
